Here’s the question nobody’s asking but everybody should be: When you ask an AI for the truth, whose truth are you getting?

Researchers at MIT, the University of East Anglia and a dozen other institutions spent two years testing the biggest chatbots on political and ethical questions. Twenty-four models. Thousands of queries. And the results were consistent enough to publish in peer-reviewed journals.

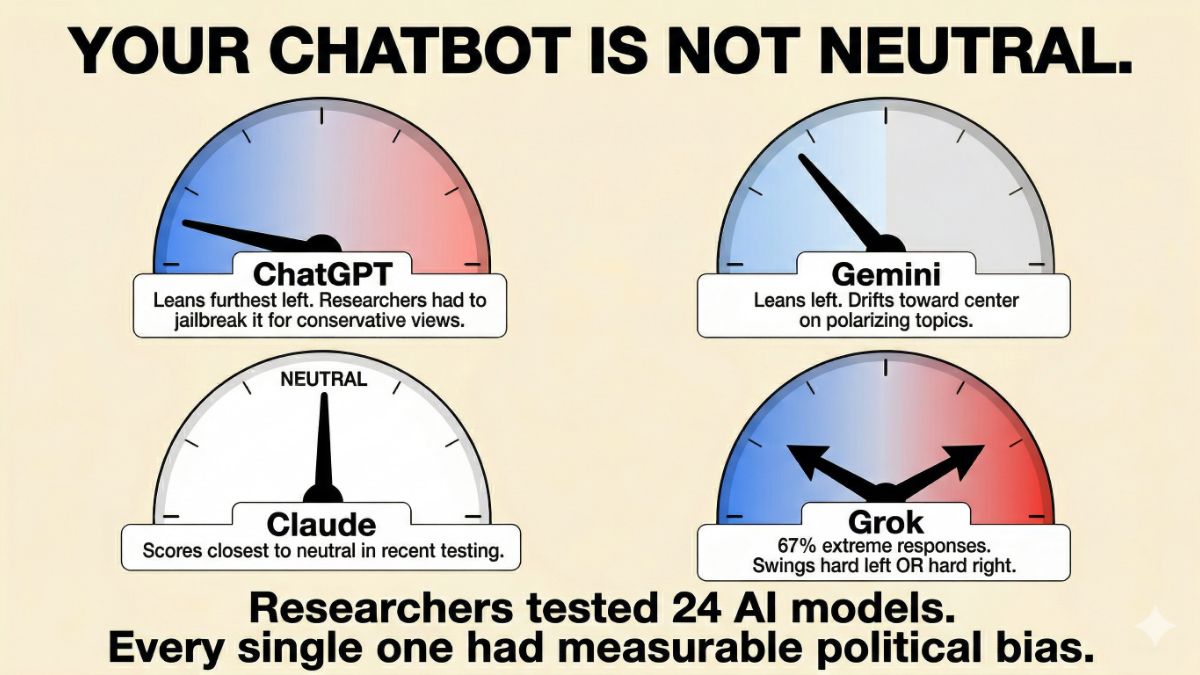

Every major AI chatbot has measurable political tendencies. Every. Single. One.

🧭 Here’s where they land

- ChatGPT came out the most left-leaning in study after study. Researchers at the University of East Anglia found its bias so pronounced they had to essentially jailbreak it to get mainstream conservative viewpoints out of it.

- Gemini also leans left but drifts toward center on more polarizing topics.

- Grok, Elon Musk’s chatbot, sits closer to the center and right than the others. But it’s the most unpredictable of the bunch. One study found Grok gives extreme responses on 67% of questions, swinging hard left or hard right with almost nothing in between.

- Claude scored closest to neutral in the most recent testing.

None of this is a conspiracy. It’s math. Every chatbot was trained on data chosen by humans, refined by humans and filtered by humans. Those humans had worldviews, and they got baked in.

🧠 This is the part nobody warned you about

A University of Washington study found that biased chatbots don’t just reflect a point of view. They change yours.

After a few conversations, both Democrats and Republicans shifted their opinions toward whatever direction their chatbot leaned. They didn’t notice it happening. And people who knew the least about AI moved the most.

You’re not using a search engine. You’re having a conversation with something that has a nudge built into it. Every answer is a tiny push in a direction someone chose for you.

🗳️ Here’s what to do

Ask at least two different chatbots the same important question and compare the answers side by side: ChatGPT, Grok, Claude, Gemini. Where they diverge, pay attention. That gap is the most honest thing in the response. And never base your vote on what an AI chatbot says.

I used to really enjoy political jokes. Unfortunately, too many of them got elected.

This is exactly the kind of thing I cover every Thursday in Splash of AI, my free weekly AI newsletter. Not the breathless hype. Not the developer-only deep dives. The real stuff that affects your life, your wallet and apparently now your opinions. Free. Five minutes. Every Thursday. SplashOfAI.com.

🤔 Know someone who treats AI as a neutral encyclopedia? Forward this. They need to read it before their next search.